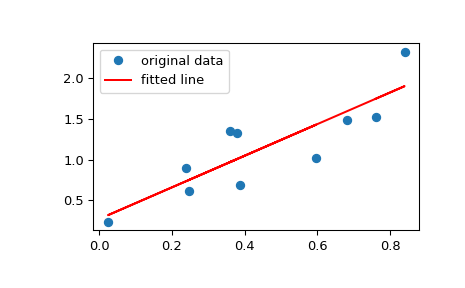

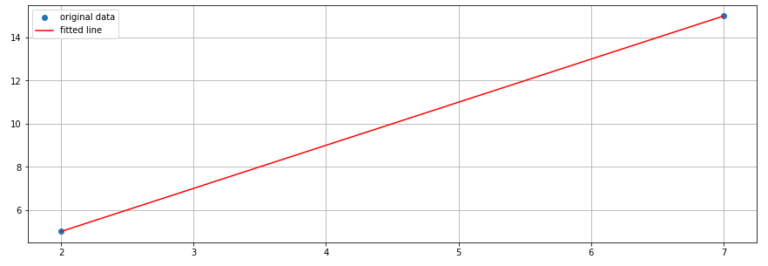

SciPy has a module called scipy.stats that comes with many routines for statistics. First, import numpy and the scipy.stats module from SciPy. To calculate the three coefficients mentioned earlier, you can then call scipy.stats.pearsonr(), scipy.stats.spearmanr(), and scipy.stats.kendalltau().

The purpose of linear correlation is to measure how close the mathematical relationship between the variables of a dataset is to a linear function. The closer the relationship between two variables is to a linear function, the stronger their linear correlation and the higher the absolute value of the correlation coefficient.

Let's say you have a dataset with two features, x and y. Each of these features has n values, meaning that x and y are n-tuples. The first value of feature x, x1, corresponds to the first value of feature y, y1.
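As a rough illustration of the scipy.stats routines, the sketch below computes the Pearson, Spearman, and Kendall coefficients for two small arrays. The data values are made up for this example and are not taken from the article; each function returns a result that unpacks into the coefficient and a p-value.

```python
import numpy as np
import scipy.stats

# Illustrative data (an assumption for this sketch): y tends to grow with x
x = np.arange(10, 20)
y = np.array([2, 1, 4, 5, 8, 12, 18, 25, 96, 48])

# Each call returns the coefficient first and the p-value second
pearson_r, pearson_p = scipy.stats.pearsonr(x, y)
spearman_rho, spearman_p = scipy.stats.spearmanr(x, y)
kendall_tau, kendall_p = scipy.stats.kendalltau(x, y)

print("Pearson r:   ", pearson_r)
print("Spearman rho:", spearman_rho)
print("Kendall tau: ", kendall_tau)
```

For this data the two rank coefficients come out higher than Pearson's r, because y rises almost monotonically with x but not along a straight line.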

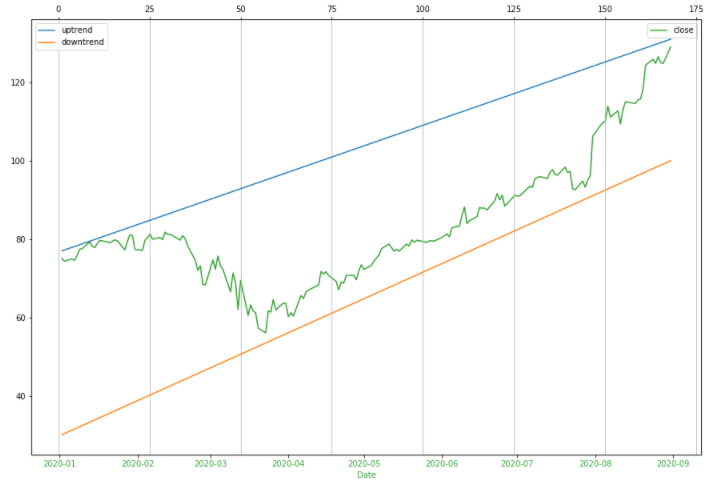

Weak or no correlation- In this type of correlation, there is no observable association between two features, because the relationship between the two variables is weak.

Positive correlation- In this type of correlation, large values of one feature correspond to large values of another feature. When plotted, the values of y tend to increase with an increase in the values of x, showing a strong correlation between the two.

Correlation goes hand-in-hand with other statistical quantities like the mean, variance, standard deviation, and covariance. In this article, we will be focusing on three major correlation coefficients: Pearson's, Spearman's, and Kendall's. Pearson's coefficient measures linear correlation, while the Kendall and Spearman coefficients compare the ranks of data. The SciPy, NumPy, and Pandas libraries come with numerous correlation functions that you can use to calculate these coefficients. If you need to visualize the results, you can use Matplotlib.

NumPy comes with many statistics functions. An example is the np.corrcoef() function, which gives a matrix of Pearson correlation coefficients. To use the NumPy library, first import it under the alias np. After storing the result of np.corrcoef() for x and y in a variable named corr, type corr on the Python terminal to see the generated correlation matrix.

The correlation matrix is a two-dimensional array showing the correlation coefficients. If you observe keenly, you will notice that the values on the main diagonal, that is, upper left and lower right, are equal to 1. The value on the upper left is the correlation coefficient for x and x. The value on the lower right is the correlation coefficient for y and y. However, the lower left and the upper right values are of the most significance, and you will need them frequently. The two values are equal and they denote the Pearson correlation coefficient for variables x and y.
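A minimal sketch of np.corrcoef() follows, assuming two made-up arrays x and y (the values are illustrative, not from the article). It builds the 2x2 correlation matrix and reads the x-y coefficient from an off-diagonal entry.

```python
import numpy as np

# Illustrative data (an assumption for this sketch)
x = np.arange(10, 20)
y = np.array([2, 1, 4, 5, 8, 12, 18, 25, 96, 48])

# Rows and columns of the matrix are ordered x, y
corr = np.corrcoef(x, y)
print(corr)

# Main diagonal: correlation of x with x and of y with y (both 1)
# Off-diagonal: Pearson correlation between x and y (the two entries match)
r_xy = corr[0, 1]
print(r_xy)
```

Reading corr[0, 1] or corr[1, 0] gives the same number, so either entry can be used when only the x-y coefficient is needed.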

In a negative correlation, the converse is also true: small values of one feature correspond to large values of another feature. If you plot this relationship on a Cartesian plane, the y values will increase as the x values decrease.
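To make the negative case concrete, here is a small sketch using a Pandas table in which each row is an observation and each column is a feature. The column names and values are made up for illustration; Series.corr() and DataFrame.corr() compute the Pearson coefficient by default.

```python
import pandas as pd

# Hypothetical table: each row is one observation, each column one feature.
# The y values are chosen to fall as x rises.
df = pd.DataFrame({
    "x": [1, 2, 3, 4, 5, 6],
    "y": [12, 10, 9, 7, 4, 3],
})

# Pearson coefficient between the two columns (the default method)
pearson = df["x"].corr(df["y"])
print(pearson)  # negative, because y decreases as x increases

# Full correlation matrix for every pair of columns
corr_matrix = df.corr()
print(corr_matrix)
```

Both calls also accept method="spearman" or method="kendall" when a rank-based coefficient is wanted instead.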

You can skip to a specific section of this Python correlation statistics tutorial using the table of contents below:

- Rank Correlation Implementation in NumPy and SciPy
- Rank Correlation Implementation in Pandas

The variables within a dataset may be related in different ways. One variable may depend on the values of another variable, two variables may depend on a third unknown variable, and so on. In statistics and data science, it helps to determine the underlying relationship between variables.

Correlation is a measure of how strongly two variables are related to each other. Once data is organized in the form of a table, the rows of the table become the observations while the columns become the features or the attributes.

Negative correlation- This is a type of correlation in which large values of one feature correspond to small values of another feature. If you plot this relationship on a Cartesian plane, the y values will decrease as the x values increase.


When dealing with data, it's important to establish the unknown relationships between various variables. Beyond discovering these relationships, it is important to quantify the degree to which the variables depend on each other. Such statistics are used in science and technology, and Python provides tools for calculating them. In this article, I will show you how to use the SciPy, NumPy, and Pandas libraries in Python to calculate correlation coefficients between variables.